Bhuvan Sachdeva

I'm a predoctoral research fellow at Sankara Eye Hospital and a visiting researcher at Microsoft Research, where I have had the pleasure of working with Dr. Mohit Jain, Dr. Vineeth N. Balasubramanian, Prof. Thomas Schultz, Dr. Kaushik Murali, and Dr. Maximilian W.M. Wintergerst.

I work at the intersection of ML, vision, and healthcare. Broadly, I have worked on 1) understanding the dynamics of task transfer in vision-language models, 2) improving surgical workflow analysis and enabling automated complication detection in cataract surgery, and 3) developing expert-in-the-loop chatbots for patients and healthcare workers.

Research

I'm interested in enhancing generalization and out-of-domain performance in foundation models through knowledge transfer across tasks and data-efficient adaptation. I am also passionate about applying machine learning and computer vision techniques to address challenges in healthcare.

|

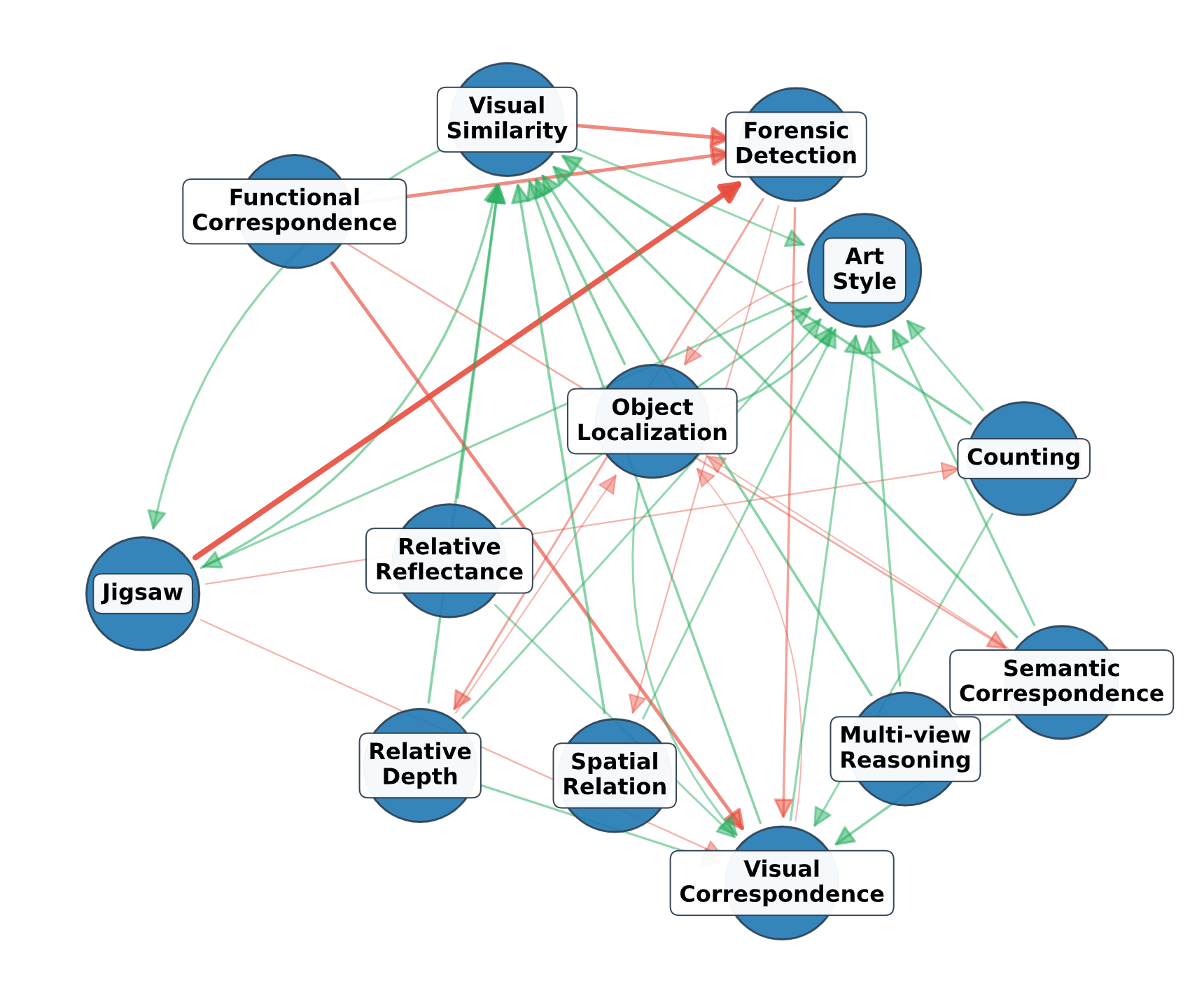

Understanding Task Transfer in Vision-Language Models

Bhuvan Sachdeva*, Karan Uppal*, Abhinav Java*, Vineeth N. Balasubramanian CVPR'26 | Unireps Workshop @ Neurips'25 project / arxiv In this work, we investigate how finetuning on one task affects zero-shot performance on others. We finetune the Qwen-2.5-VL model family (3B, 7B, and 32B) across 13 perception tasks from the BLINK Benchmark. We introduce the Perfection Gap Factor (PGF) to better characterize task-to-task transfer. Our findings reveal intrinsic task transfer patterns and further show the role of model size in shaping these shared representations. Finally, we leverage these task interactions to identify effective proxy datasets when target-task data is unavailable, demonstrating the practical utility of our framework. |

|

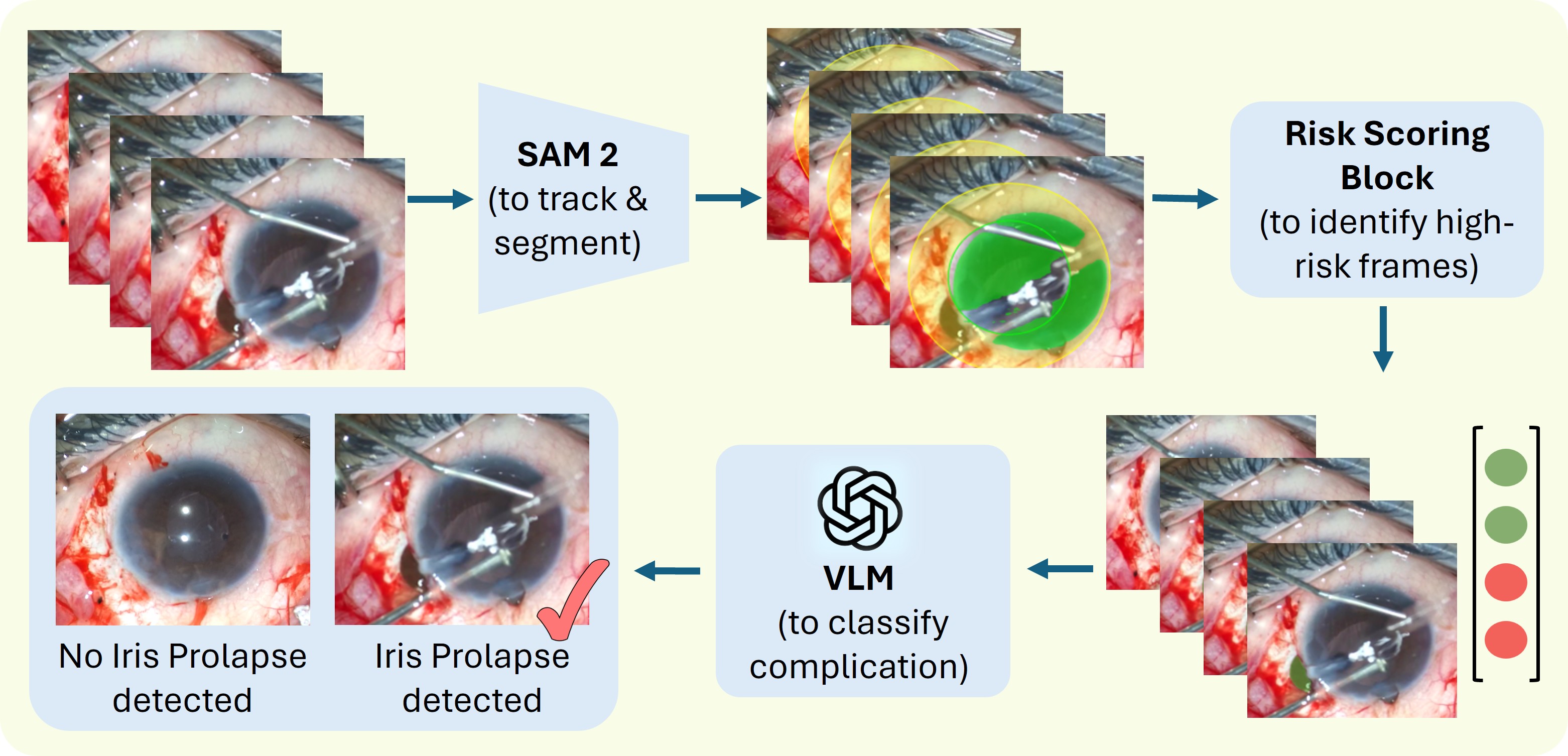

CataractCompDetect: Intraoperative Complication Detection in Cataract Surgery

Bhuvan Sachdeva, Sneha Kumari, Rudransh Agarwal, Shalaka Kumaraswamy, Niharika singri prasad, Simon Mueller, Raphael Lechtenboehmer, Maximilian W. M. Wintergerst, Thomas Schultz, Kaushik Murali, Mohit Jain Under submission arxiv We propose CataractCompDetect, the first framework for intra-operative complication detection in cataract surgery. Using SAM-2 for object tracking and a complication-specific risk-scoring module, the system detects high-risk segments for VLM-based reasoning. To enable the evaluation of our framework, we also release CataComp, the first dataset annotated for three intra-operative complications (iris prolapse, PCR, and vitreous loss) in cataract surgery. |

|

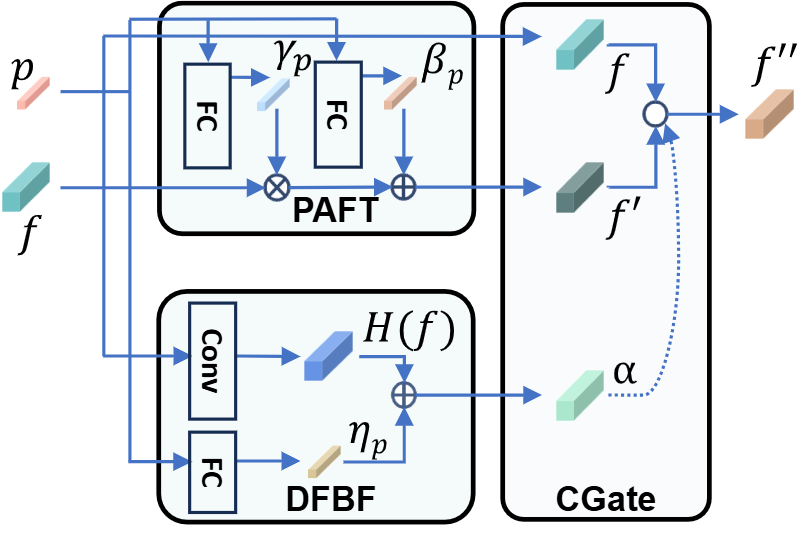

Phase-Informed Tool Segmentation for Manual Small-Incision Cataract Surgery

Bhuvan Sachdeva*, Naren Akash*, Tajamul Ashraf, Simon Müller, Thomas Schultz, Maximilian W.M. Wintergerst, Niharika Singri Prasad, Kaushik Murali, Mohit Jain MICCAI'25 github / arxiv We present the first comprehensive dataset for MSICS cataract surgery tool segmentation and introduce a novel phase-informed tool segmentation method, ToolSeg, which leverages surgical phases to enhance tool segmentation accuracy. |

|

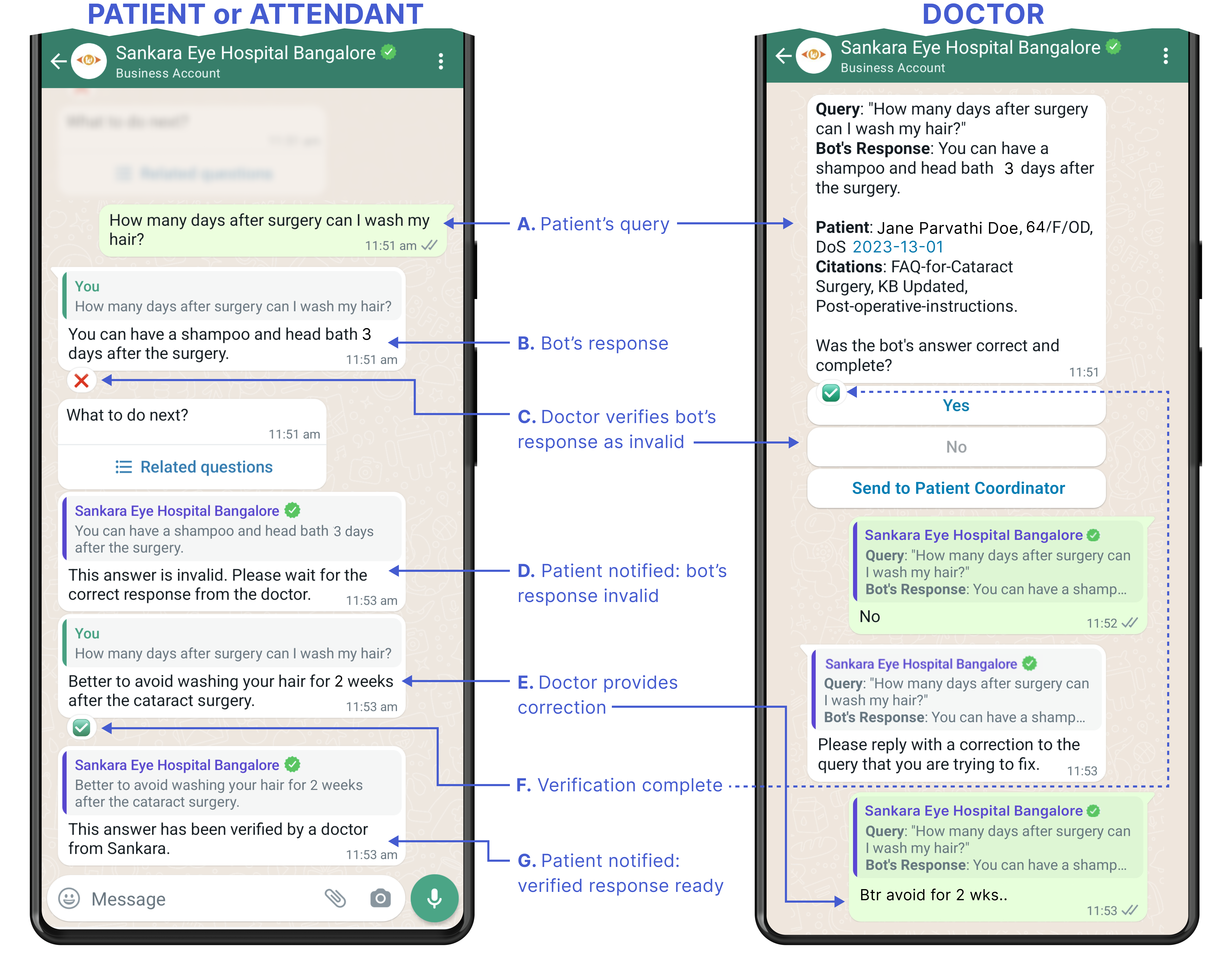

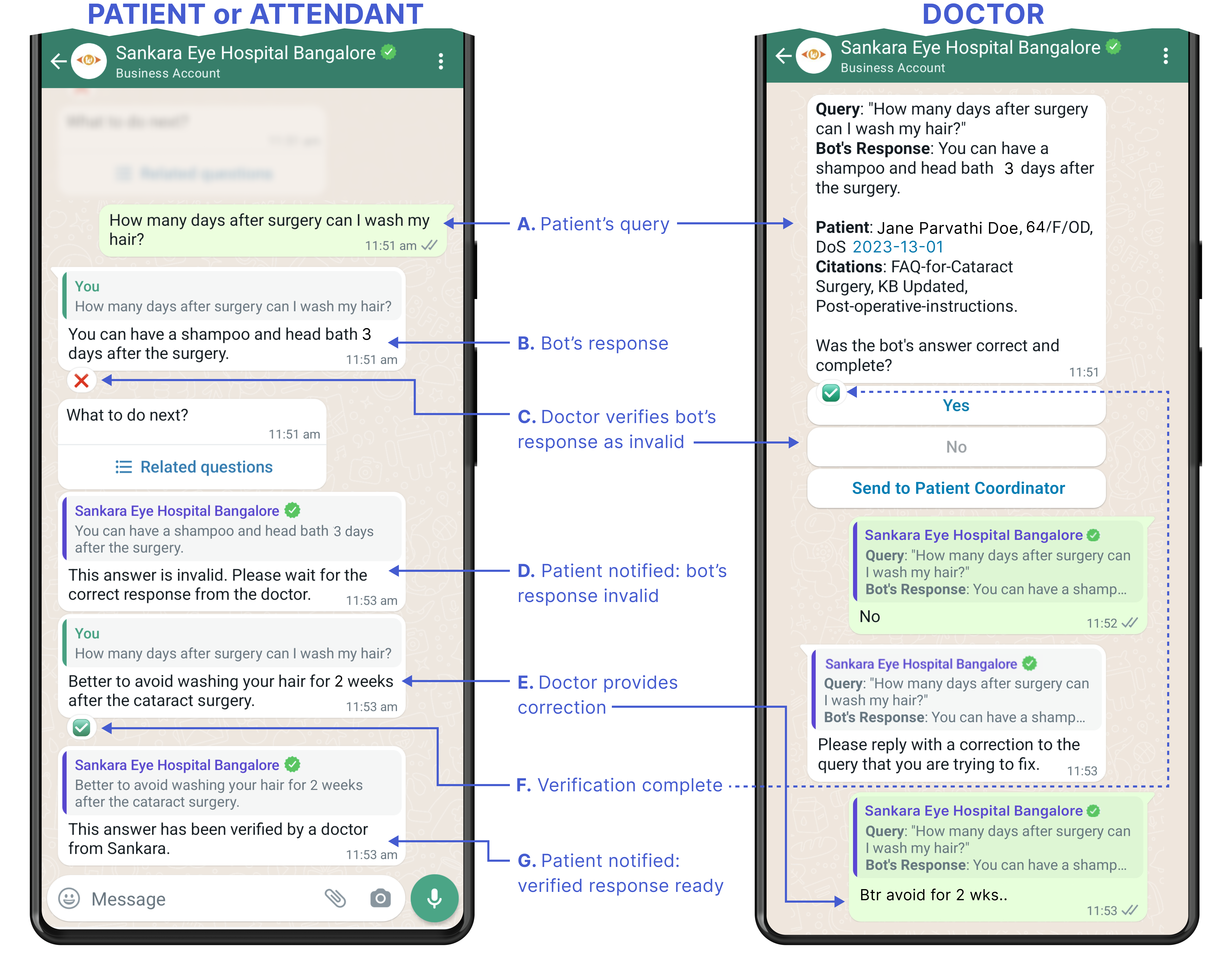

Learnings from a Large-Scale Deployment of an LLM-Powered Expert-in-the-Loop Healthcare Chatbot

Bhuvan Sachdeva*, Pragnya Ramjee*, Geeta Fulari, Kaushik Murali, Mohit Jain European Journal of Ophthalmology (EJO)'25 github / arxiv We present findings from a 24-week large-scale deployment of CataractBot involving 318 patients and attendants who sent nearly 2,000 messages. Our analysis revealed that medical questions significantly outnumbered logistical ones, with experts rating 84.52% of medical answers as accurate and negligible hallucinations. As the knowledge base expanded with expert corrections, system performance improved by 19.02%, reducing expert workload. These insights provide valuable guidance for designing future LLM-powered healthcare chatbots. |

CataractBot: an LLM-powered expert-in-the-loop chatbot for cataract patients

Pragnya Ramjee*, Bhuvan Sachdeva*, Satvik Golechha, Shreyas Kulkarni, Geeta Fulari, Kaushik Murali, Mohit Jain

ACM IMWUT / UbiComp'25

github / project / arxiv

We developed CataractBot, an LLM-powered chatbot that answers questions about cataract surgery by querying a curated knowledge base and providing expert-verified responses. The system offers multimodal and multilingual capabilities to address the information gap between patients and healthcare providers. In our deployment study with 49 patients and attendants, 4 doctors, and 2 patient coordinators, CataractBot demonstrated potential in providing reliable, accessible information about cataract surgery.

|

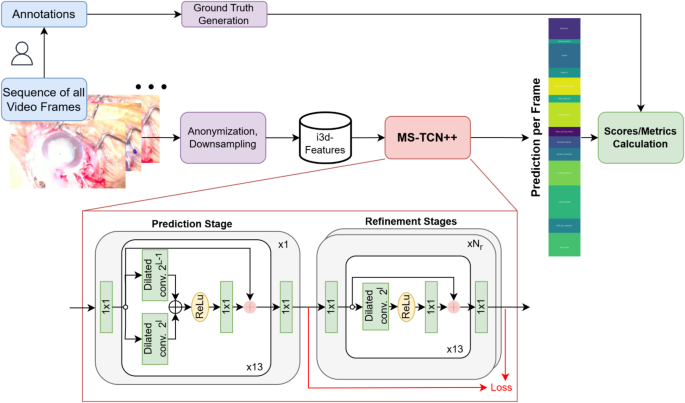

Phase recognition in manual Small-Incision cataract surgery with MS-TCN++ on the novel SICS-105 dataset

Simon Müller, Bhuvan Sachdeva, Singri Niharika Prasad, Raphael Lechtenboehmer, Frank G Holz, Robert P Finger, Kaushik Murali, Mohit Jain, Maximilian WM Wintergerst, Thomas Schultz Nature Scientific Reports'25 This study introduces the first SICS video dataset (SICS-105) and evaluates deep learning-based surgical phase recognition using the MS-TCN++ architecture. We compare performance between SICS and phacoemulsification procedures, showing that while phase recognition achieves 85.56% accuracy for SICS, it performs better on the standard phacoemulsification dataset (89.97%). |

|

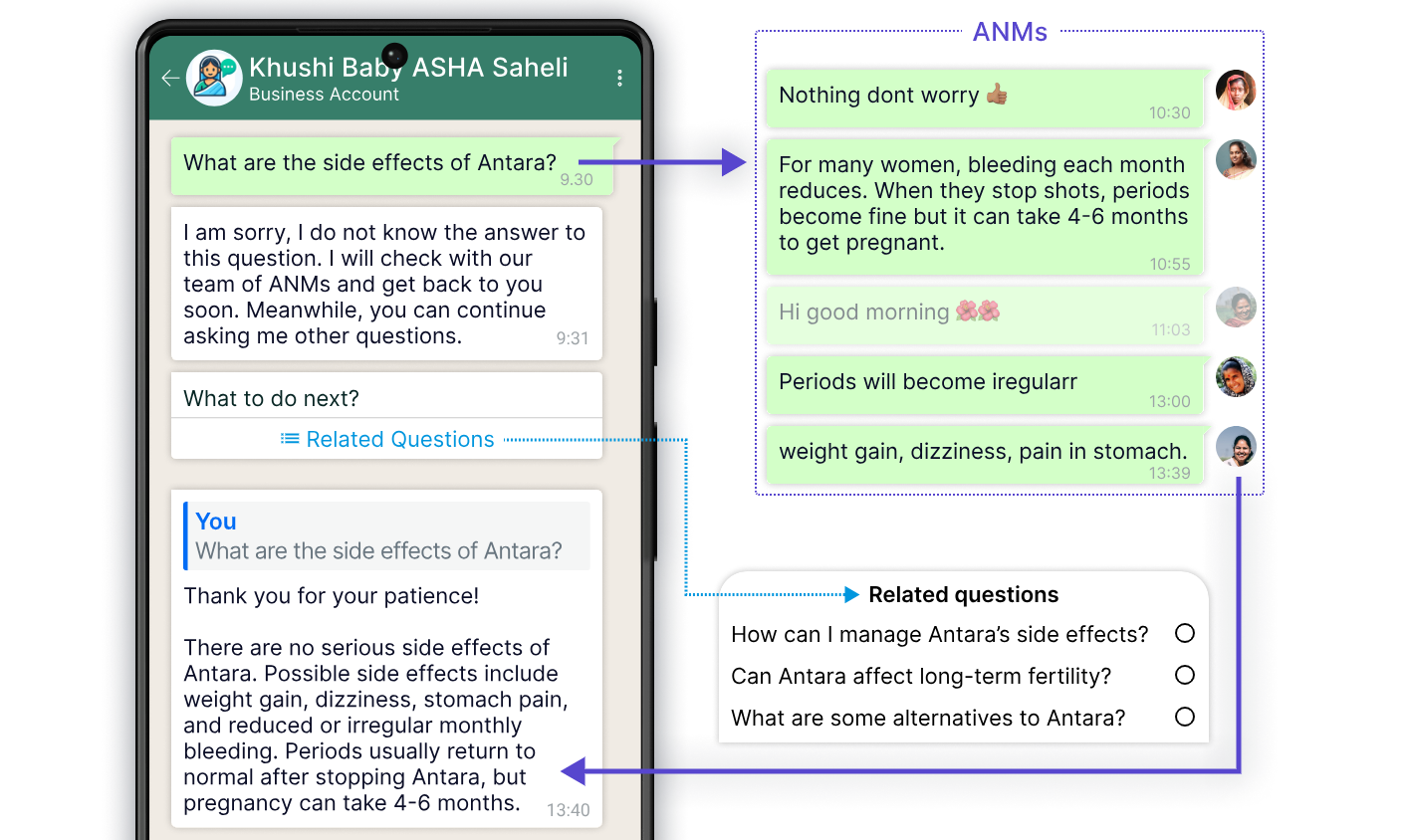

ASHABot: an LLM-powered chatbot to support the informational needs of community health workers

Pragnya Ramjee, Mehak Chhokar, Bhuvan Sachdeva, Mahendra Meena, Hamid Abdullah, Aditya Vashistha, Ruchit Nagar, Mohit Jain CHI'24 github / project / arxiv In this paper, we introduce ASHABot, an LLM-powered WhatsApp chatbot with experts-in-the-loop designed to support community health workers (CHWs) in India. Our study found that ASHABot provided CHWs with a private channel for asking basic and sensitive questions they hesitated to ask supervisors, while establishing itself as a trusted information source. Supervisors contributed knowledge to the system but expressed concerns about workload and accountability. |

Work Experience

Sankara Eye Hospital & Microsoft Research, India | Research Fellow | (August 2023 - Present)

Kroop AI | Research Intern | (Sept 2022 - Mar 2023)

Prime Video, Amazon | Applied Scientist Intern | (May 2022 - July 2022)

Kroop AI | Research Intern | (June 2021 - Apr 2022)

Website adapted from Jon Barron's website.